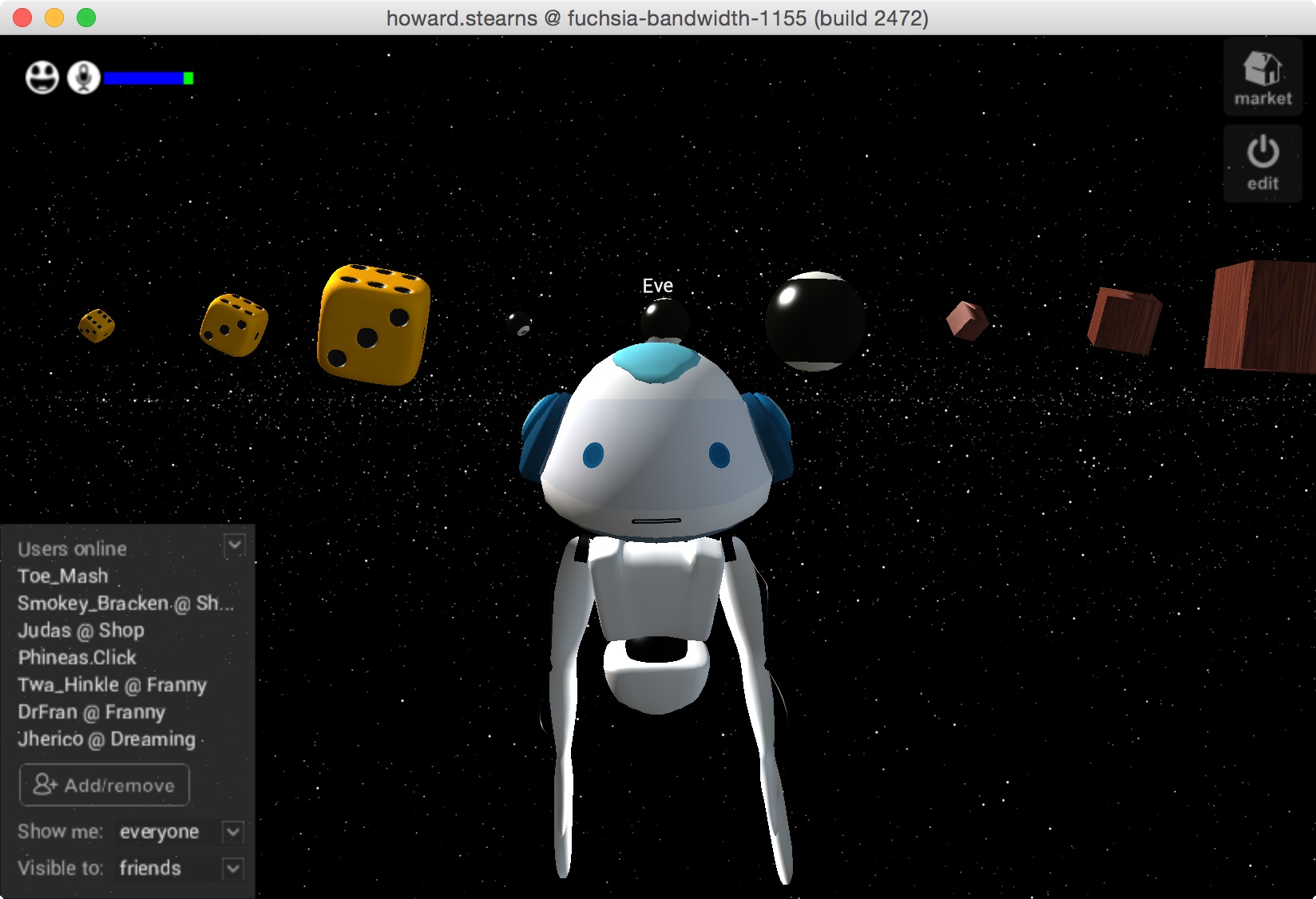

We do a lot of live demos. Our VR software is in alpha and we sometimes run into bugs, but we pride ourselves on showing the true state of things instead of slides and videos. The demo is often a build with radical changes, created just a few moments before. And yet the most common trouble is the unpredictable network connectivity one finds at demo venues. Our folks have run quite a few demos tethered to a cell phone.

But this demo from Sony is in a whole other class. Ouch. I’m sure we’ll all have great hand controllers within a year or so, but right now, hand controller hardware really sucks.

By the way, I think their IK (inverse kinematics) looks great, and I especially like the jump. But notice that the avatars are stuck on a pedestal instead of moving around. I haven’t seen anyone combine IK with artist-designed animations the way we have.